Blog

A sneak peek into the world of Data Engineering

What is Data Engineering?

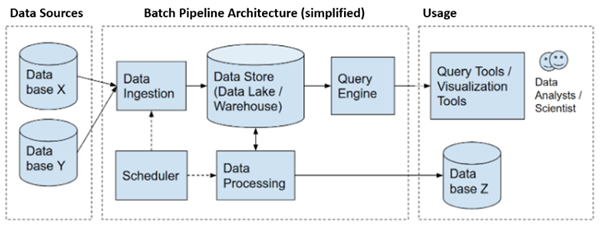

Engineers design and build solutions. Data engineers design and build data pipelines that transform data into the required formats and deliver it to Data Scientists, Business Analysts, other end users, and stakeholders. Before the data can be consumed, it must be shaped into a highly usable state.

These pipelines fetch data from different sources and consolidate it in a single data warehouse that presents the data uniformly as a single source of truth. The sources vary widely: SQL and NoSQL databases, structured and unstructured data, RDBMSs, file systems, and so on.

How is it done?

Different skills, strategies, and tools are used to build, maintain, and scale data pipelines and data warehouses. Some of them are:

SQL – This is the staple of Data Engineering tasks and is expected to remain so for a long time.

Data Modelling – This is one of the tasks done during the design phase. It forms the foundation of the data warehouse and requires knowing how to structure tables and where to normalize or denormalize. Domain expertise is also important, as a Data Modeller must know which business attributes to use as the grain, dimensions, and facts.
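To make grain, dimensions, and facts concrete, here is a minimal star-schema sketch using Python's built-in sqlite3 module. The tables (dim_date, dim_destination, fact_booking), their columns, and the sample rows are hypothetical, chosen only for illustration; the grain of the fact table is one row per booking.

```python
import sqlite3

# In-memory database so the sketch is self-contained.
conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables: descriptive attributes used to slice the facts.
CREATE TABLE dim_date (
    date_key INTEGER PRIMARY KEY,   -- e.g. 20210915
    year     INTEGER,
    month    INTEGER
);
CREATE TABLE dim_destination (
    destination_key INTEGER PRIMARY KEY,
    city            TEXT,
    country         TEXT            -- denormalized into the dimension on purpose
);
-- Fact table. Grain: one row per booking.
CREATE TABLE fact_booking (
    booking_id      INTEGER PRIMARY KEY,
    date_key        INTEGER REFERENCES dim_date(date_key),
    destination_key INTEGER REFERENCES dim_destination(destination_key),
    pax             INTEGER,        -- measure: number of travellers
    revenue         REAL            -- measure
);
""")

conn.executemany("INSERT INTO dim_date VALUES (?, ?, ?)",
                 [(20210915, 2021, 9), (20211002, 2021, 10)])
conn.executemany("INSERT INTO dim_destination VALUES (?, ?, ?)",
                 [(1, "Kyoto", "Japan"), (2, "Tokyo", "Japan")])
conn.executemany("INSERT INTO fact_booking VALUES (?, ?, ?, ?, ?)",
                 [(1, 20210915, 1, 2, 1200.0),
                  (2, 20210915, 2, 4, 3000.0),
                  (3, 20211002, 1, 1, 500.0)])

# Typical analytical query: aggregate the facts, grouped by a dimension attribute.
rows = conn.execute("""
    SELECT d.month, SUM(f.revenue) AS revenue
    FROM fact_booking f
    JOIN dim_date d ON d.date_key = f.date_key
    GROUP BY d.month
    ORDER BY d.month
""").fetchall()
print(rows)  # [(9, 4200.0), (10, 500.0)]
```

Keeping country inside dim_destination (rather than in a separate country table) is a deliberate denormalization that saves a join in most reporting queries.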

ETL and ELT – These are two important strategies for designing data pipelines. In ETL (Extract, Transform, Load), data is transformed before it is loaded into the target; in ELT (Extract, Load, Transform), raw data is loaded first and then transformed inside the target system. Each approach has its own suitable use cases; ETL, for example, is good for integrating multiple sources. Depending on the scenario, further variants such as the ETLT approach can also be used.
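The difference between ETL and ELT is where the transformation runs. The sketch below assumes two hypothetical source systems and uses an in-memory SQLite database as a stand-in for the warehouse. It applies the same cleanup once in Python before loading (ETL) and once in SQL after loading (ELT).

```python
import sqlite3

# Hypothetical raw records from two source systems, with inconsistent formats.
source_a = [("B1", "kyoto ", "1200")]
source_b = [("B2", "TOKYO", "3000")]

def transform(row):
    """Clean one record: trim and title-case the city, cast the amount to float."""
    booking_id, city, amount = row
    return booking_id, city.strip().title(), float(amount)

conn = sqlite3.connect(":memory:")

# ETL: transform in the pipeline, then load only clean rows into the target.
conn.execute("CREATE TABLE etl_bookings (id TEXT, city TEXT, amount REAL)")
conn.executemany("INSERT INTO etl_bookings VALUES (?, ?, ?)",
                 [transform(r) for r in source_a + source_b])

# ELT: load the raw rows as-is, then transform inside the target with SQL.
conn.execute("CREATE TABLE raw_bookings (id TEXT, city TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_bookings VALUES (?, ?, ?)", source_a + source_b)
conn.execute("""
    CREATE TABLE elt_bookings AS
    SELECT id,
           UPPER(SUBSTR(TRIM(city), 1, 1)) || LOWER(SUBSTR(TRIM(city), 2)) AS city,
           CAST(amount AS REAL) AS amount
    FROM raw_bookings
""")

etl = conn.execute("SELECT * FROM etl_bookings ORDER BY id").fetchall()
elt = conn.execute("SELECT * FROM elt_bookings ORDER BY id").fetchall()
print(etl == elt)  # True: same result, different place where the transform runs
```

ELT leans on the target system's compute for transformations and keeps the raw data available for reprocessing, while ETL keeps unclean data out of the target entirely.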

Data Integration Tools – Also known as ETL tools, these follow a low-code/no-code approach and are used to create data pipelines. A project is divided into many jobs, and each job has many components. Programming is done through a GUI by combining components, which provide functionality for operations that would otherwise be more complicated or lengthy to write in a programming language. Each job has a clearly defined purpose.

These tools provide many “Data-centric” and “Pipeline-centric” features. Informatica, Talend, ODI (Oracle), and SSIS (Microsoft) are among the leaders in the ETL tools arena.

Data Quality and Validation – ETL testing, along with database testing, is another important skill under the Data Engineering umbrella. It ensures the quality of the primary asset: the data. Raw data from the sources passes through many stages and transformations before it is presented to the relevant data consumers, so ETL testing is done to make sure the transformed data is accurate. Depending on the complexity of the project, ETL testing can be performed at various stages of the pipeline, and there are also products for automating it.
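A few of the most common ETL tests can be sketched in plain Python: row-count reconciliation, duplicate-key checks, not-null checks, and a control total on a numeric column. The validate function and the sample records are hypothetical. Dedicated ETL-testing products go much further, but their basic checks follow the same idea.

```python
def validate(source_rows, target_rows, key, required, amount_col):
    """Compare a source extract with the loaded target; return a list of failures."""
    failures = []
    # Completeness: every source row should arrive in the target.
    if len(source_rows) != len(target_rows):
        failures.append(f"row count: source={len(source_rows)}, target={len(target_rows)}")
    # Uniqueness: the business key must not be duplicated in the target.
    keys = [row[key] for row in target_rows]
    dupes = sorted({k for k in keys if keys.count(k) > 1})
    if dupes:
        failures.append(f"duplicate {key}: {dupes}")
    # Not-null: required columns must be populated.
    for col in required:
        missing = [row[key] for row in target_rows if row.get(col) in (None, "")]
        if missing:
            failures.append(f"null {col}: {missing}")
    # Accuracy: a control total on a numeric column should survive the load.
    src_total = sum(row[amount_col] for row in source_rows)
    tgt_total = sum(row.get(amount_col) or 0 for row in target_rows)
    if abs(src_total - tgt_total) > 1e-9:
        failures.append(f"{amount_col} total: source={src_total}, target={tgt_total}")
    return failures

source = [{"id": 1, "city": "Kyoto", "amount": 1200.0},
          {"id": 2, "city": "Tokyo", "amount": 3000.0}]
loaded_ok = [dict(row) for row in source]
loaded_bad = [{"id": 1, "city": "Kyoto", "amount": 1200.0},
              {"id": 1, "city": None, "amount": 3000.0}]

print(validate(source, loaded_ok, "id", ["city"], "amount"))   # []
print(validate(source, loaded_bad, "id", ["city"], "amount"))  # ['duplicate id: [1]', 'null city: [1]']
```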

After all, why is it done?

Data Engineering is not part of the applications that perform, or help perform, an organization's operations. So why do we need it? Data Engineering applied to operational data can give deep insights into the major and minor parameters of an organization and its customers. In other words, today’s Business Intelligence is founded on Data Engineering. It gives a picture of an organization's current health and can also help forecast its future health. This helps management make better, informed decisions “based on data” in every possible area. Beyond determining an organization’s health, Data Engineers provide data to Data Scientists for further analysis, to find solutions that drive growth in existing and new areas.

Elasticsearch, Logstash, Kibana (ELK Stack)

Tumlare Software Services Pvt. Ltd. (TSS) developed an in-house solution combining three technologies, Elasticsearch, Logstash, and Kibana (the ELK stack), to ingest raw legacy data into a search engine. This makes the data easily accessible and consumable via APIs (Application Programming Interfaces) in JSON format, and digitally transformed so that any other platform that needs operational data can integrate with it.

Elasticsearch is a distributed, free and open search and analytics engine for all types of data, including textual, numerical, geospatial, structured, and unstructured. Elasticsearch is built on Apache Lucene and was first released in 2010 by Elasticsearch N.V. (now known as Elastic). Known for its simple REST APIs, distributed nature, speed, and scalability, Elasticsearch is the central component of the Elastic Stack, a set of free and open tools for data ingestion, enrichment, storage, analysis, and visualization. Commonly referred to as the ELK Stack (after Elasticsearch, Logstash, and Kibana), the Elastic Stack now includes a rich collection of lightweight shipping agents known as Beats for sending data to Elasticsearch.

Raw data flows into Elasticsearch from a variety of sources, including legacy applications, logs, system metrics, and web applications. Data ingestion is the process by which this raw data is parsed, normalized, and enriched before it is indexed in Elasticsearch. Once the data is indexed, users can run complex queries against it and use aggregations to retrieve complex summaries. From Kibana, users can create powerful visualizations of their data, share dashboards, and manage the Elastic Stack.
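The parse, normalize, and enrich steps can be sketched as follows. In the setup described above, Logstash performs this work; here a small Python function stands in for it. The sketch assumes a hypothetical pipe-delimited legacy record format and a hypothetical office-code lookup table. Its output is an NDJSON body in the action-line/document-line format accepted by the Elasticsearch _bulk API.

```python
import json
from datetime import datetime, timezone

# Hypothetical lookup table used for enrichment (e.g. sourced from master data).
OFFICE_BY_CODE = {"KYO": "Kyoto", "TYO": "Tokyo"}

def ingest(line):
    """Parse a raw pipe-delimited legacy record, normalize it, and enrich it."""
    # Parse: split the raw line into fields.
    booking_id, office_code, date_str, amount = line.strip().split("|")
    # Normalize: consistent casing, ISO-8601 dates, numeric types.
    office_code = office_code.strip().upper()
    date = datetime.strptime(date_str.strip(), "%d/%m/%Y").replace(tzinfo=timezone.utc)
    return {
        "booking_id": booking_id.strip(),
        "office_code": office_code,
        "date": date.isoformat(),
        "amount": float(amount),
        # Enrich: add a field that was not present in the raw record.
        "office_name": OFFICE_BY_CODE.get(office_code, "unknown"),
    }

def bulk_body(docs, index):
    """Build an NDJSON body: one action line followed by one document line per doc."""
    lines = []
    for doc in docs:
        lines.append(json.dumps({"index": {"_index": index, "_id": doc["booking_id"]}}))
        lines.append(json.dumps(doc))
    return "\n".join(lines) + "\n"  # a bulk body must end with a newline

raw = ["B1|kyo|15/09/2021|1200", "B2| TYO |02/10/2021|3000.50"]
docs = [ingest(r) for r in raw]
body = bulk_body(docs, "bookings")
print(body)
```

Such a body would typically be sent to the cluster with POST /_bulk and the Content-Type header application/x-ndjson; that request is left out so the sketch runs without a cluster.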

The speed and scalability of Elasticsearch and its ability to index many types of content mean that it can be used for a number of use cases:

Application search

Website search

Enterprise search

Logging and log analytics

Infrastructure metrics and container monitoring

Application performance monitoring

Geospatial data analysis and visualization

Security analytics

Business analytics

Logstash, one of the core products of the Elastic Stack, is used to aggregate and process data and send it to Elasticsearch. Logstash is an open source, server-side data processing pipeline that enables you to ingest data from multiple sources simultaneously and enrich and transform it before it is indexed into Elasticsearch.

Kibana is a data visualization and management tool for Elasticsearch that provides real-time histograms, line graphs, pie charts, and maps. Kibana also includes advanced applications such as Canvas, which allows users to create custom dynamic infographics based on their data, and Elastic Maps for visualizing geospatial data.

Elastic recognized as a Challenger in the 2021 Gartner Magic Quadrant for Insight Engines

https://www.elastic.co/blog/elastic-recognized-as-a-challenger-in-the-2021-gartner-magic-quadrant-for-insight-engines

How Java evolved over the years

The history of Java is very interesting. Java was created by James Gosling, Patrick Naughton, Chris Warth, Ed Frank, and Mike Sheridan at Sun Microsystems, Inc. in 1991. An interesting fact: Gosling had an oak tree just outside his office, so the language was initially named Oak. It was later renamed Green and finally Java, after Java coffee.

The basic idea behind the language was to create a platform-independent language for developing software for consumer electronic devices such as microwave ovens and remote controls.

Java solves the security and portability issues of the other languages in use at the time. The key that allows it to do so is bytecode: a highly optimized set of instructions designed to be executed by the Java Virtual Machine (JVM).
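To illustrate why bytecode gives portability, the toy sketch below defines a tiny, invented instruction set and a stack-based interpreter for it. The opcodes are hypothetical and are not real JVM instructions. The point is only that a program compiled once into bytecode runs unchanged on any machine that provides the virtual machine.

```python
# Toy opcodes, invented for illustration (NOT real JVM instructions).
PUSH, ADD, MUL, PRINT = 0, 1, 2, 3

def run(bytecode):
    """Execute a flat list of opcodes/operands on an operand stack; return printed values."""
    stack, output, pc = [], [], 0
    while pc < len(bytecode):
        op = bytecode[pc]
        if op == PUSH:                 # PUSH <value>: the operand follows the opcode
            stack.append(bytecode[pc + 1])
            pc += 2
            continue
        if op == ADD:
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == MUL:
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        elif op == PRINT:
            output.append(stack.pop())
        else:
            raise ValueError(f"unknown opcode {op} at position {pc}")
        pc += 1
    return output

# (2 + 3) * 4, "compiled" once into portable bytecode ...
program = [PUSH, 2, PUSH, 3, ADD, PUSH, 4, MUL, PRINT]
# ... and executed unchanged by any host that implements run().
print(run(program))  # [20]
```

The JVM is also a stack-based machine, which is part of why its bytecode can stay independent of any particular CPU's registers.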

Java inherits much of its character from C and C++. It took approximately eighteen months to develop the first working version, and Java was released publicly in 1995. Time magazine called Java one of the Ten Best Products of 1995.

Initially, Java was not designed for Internet applications. Later, Java had a profound effect on the Internet through a new type of networked program called the applet. Today, Java is used in Internet programming, mobile devices, games, e-business solutions, and more.

Oracle Corporation completed its acquisition of Sun Microsystems on January 27, 2010, and since then there have been a number of positive changes. Since Java 10 in 2018, a new feature release has shipped every six months; at the time of writing, the most recent is Java SE 17, a long-term support release launched in September 2021.

HCL AppDev Pack for Domino is here!

Tumlare Software Services Pvt. Ltd. (TSS) has run Domino systems for over 20 years. The tour operation systems on the Domino platform are the backbone of the company and have propelled the business for all these years without fuss and at low cost.

Let’s talk more about the technology that has enabled TSS to seamlessly connect its Domino systems to many different company portals (apps) and peripheral systems over digital platforms.

The AppDev Pack is an add-on for HCL Domino that lets users connect Domino applications with Node.js projects and create new applications that span both worlds.

HCL first announced the AppDev Pack with Domino Server V10. Through its initial release and later fixes, it has matured. TSS currently uses Domino AppDev Pack 1.0.9.

The AppDev Pack primarily adds Node.js support to HCL Domino Server. It includes these components:

• The Proton Domino server add-in task. An administrator installs and configures Proton on one or more Domino servers. If you are a Domino administrator, start with the Administration Overview page.

• The @domino/domino-db Node.js module. A developer uses this module from a Node.js application. This module uses Proton to perform operations on documents in a server database. If you are a developer, start with the API Documentation page.

• The com.hcl.domino.db Java API. A developer uses this API from a Java application. The API uses Proton to perform operations on documents in a server database. If you are a developer, start with the java-docs jar provided in the AppDev Pack kit.

• The Node.js-based Identity and Access Management service, IAM. An administrator deploys IAM alongside Domino. For more information, start with the IAM Overview page. A developer uses the IAM client library in their Node.js application to build applications that access Domino resources through:

o RESTful APIs with standard OAuth 2.0 authorization flows

o Act-as-User requests in @domino/domino-db
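The standard OAuth 2.0 authorization-code flow mentioned in the list above starts with the client redirecting the user to an authorization endpoint. Here is a minimal sketch of building that request URL. The endpoint, client ID, redirect URI, and scope are placeholders, not actual Domino IAM values, and the authorization_url helper is hypothetical.

```python
import secrets
from urllib.parse import parse_qs, urlencode, urlparse

def authorization_url(authorize_endpoint, client_id, redirect_uri, scope):
    """Build an OAuth 2.0 authorization-code request URL (RFC 6749, section 4.1.1).

    Returns the URL and the generated `state`, which the client must store and
    compare with the `state` returned on the redirect callback.
    """
    state = secrets.token_urlsafe(16)  # unguessable anti-CSRF value
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,
        "state": state,
    }
    return f"{authorize_endpoint}?{urlencode(params)}", state

# Placeholder values only: NOT real Domino IAM endpoints, clients, or scopes.
url, state = authorization_url(
    "https://iam.example.com/auth",
    "example-client-id",
    "https://app.example.com/callback",
    "example-scope",
)

# Parameters are URL-encoded; parsing the query string recovers them unchanged.
query = parse_qs(urlparse(url).query)
print(query["response_type"], query["redirect_uri"])  # ['code'] ['https://app.example.com/callback']
```

After the user approves, the authorization server redirects back with a short-lived code, which the client exchanges for an access token at the token endpoint.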

What’s New in Release 1.0.9

Release 1.0.9 includes the following changes:

• IAM Server

o CSS of IAM server user login/grant pages slightly tweaked.

o Install instructions changed slightly. Use npm install vs npm ci.

• Export DXL

o Design elements and other non-data documents can be exported selectively from a database.

o See exportDXL for Node.js API

o See exportDXL for Java API

o Added support for actAsUser tokens

• Get the ACL entries and roles of the Domino database

o See getACL for Node.js API

o See getACL for Java API

• Update the ACL entries and roles of the Domino database

o See editACL for Node.js API

o See editACL for Java API

• Replace the ACL entries and roles of the Domino database

o See setACL for Node.js API

o See setACL for Java API

• Get Domino file system entries

o See getFileSystemList for Node.js API

o See getFileSystemList for Java API

• New setup tooling for Proton and the domino-db clients, based on the Domino 12 Certificate Store. Requires Domino 12.

For more details, see the AppDev Pack for Domino documentation.

Important BI trends that will drive the future of any business

The need for Business Intelligence is greater than ever. With the invention of new technologies and cloud platforms, it has become much cheaper and easier for companies to scale their businesses and capture large volumes of data from business processes.

Traditionally, BI has played a vital role in giving businesses insight into company performance. But in today’s competitive world, where companies invest heavily in data and analytics, they need more than just reporting.

A recent survey by BARC on data, BI, and analytics shows multiple trends driving the market. I believe the following trends will deeply impact the future of BI, and every company should incorporate them to get ready for the future:

MD/DQ Management:

Master data and data quality management is the topmost trend: it has been very significant in the past and will remain significant in the future. As the saying goes, garbage in, garbage out; without good-quality data, it is very difficult for a business to make good decisions.

Good data quality should be driven by good data governance and strong business processes. Businesses should build sound processes both in their operational systems and in their BI systems to ensure the integrity of the data.

Master data management has become very important. If master data is kept in silos for each department or system, each unit will have its own definition of the master data, making it difficult to present unified views of the data. Master data management ensures that master data stays consistent across the company’s systems.
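A "golden record" is one common way to give master data a single definition. The sketch below merges hypothetical customer records from two departmental systems using a simple survivorship rule: the first non-empty value wins, in priority order. The rule, the data, and the golden_record helper are assumptions for illustration; real MDM tools support much richer matching and survivorship logic.

```python
# Hypothetical records for the same customer held by two departments.
sales = {"C001": {"name": "ACME Travel", "country": "JP", "email": None}}
finance = {"C001": {"name": "Acme Travel Ltd.", "country": None, "email": "ap@acme.example"}}

def golden_record(sources):
    """Merge per-system records into one master record per key.

    Survivorship rule (an assumption for this sketch): for each field, the first
    non-empty value wins, following the priority order of `sources`.
    """
    master = {}
    for system in sources:
        for key, record in system.items():
            merged = master.setdefault(key, {})
            for field, value in record.items():
                if merged.get(field) in (None, "") and value not in (None, ""):
                    merged[field] = value
    return master

# Finance is treated as the most trusted source for this customer.
print(golden_record([finance, sales]))
# {'C001': {'name': 'Acme Travel Ltd.', 'email': 'ap@acme.example', 'country': 'JP'}}
```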

Data discovery and visualization:

Visualization is the need of the hour: information presented visually is much easier and quicker for people to consume. It also makes it possible to present many insights together, which can lead to better decision making. But it is not just good visualization in reports or dashboards that will drive the future. It is the added capability for the business to drill down to the very core of the information or problem.
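Drill-down can be illustrated with a simple two-level hierarchy: totals by region first, then by city within a chosen region. The facts and the rollup helper below are hypothetical. BI tools carry out conceptually similar aggregations when a user drills into a chart.

```python
from collections import defaultdict

# Hypothetical sales facts: (region, city, revenue).
facts = [
    ("Asia", "Kyoto", 1200.0),
    ("Asia", "Tokyo", 3000.0),
    ("Europe", "Rome", 800.0),
    ("Asia", "Kyoto", 500.0),
]

def rollup(rows, level, **filters):
    """Sum revenue at a hierarchy level ("region" or "city"), optionally filtered.

    Drilling down means moving from "region" to "city" while filtering on the parent.
    """
    pos = {"region": 0, "city": 1}
    totals = defaultdict(float)
    for row in rows:
        if all(row[pos[k]] == v for k, v in filters.items()):
            totals[row[pos[level]]] += row[2]
    return dict(totals)

print(rollup(facts, "region"))               # {'Asia': 4700.0, 'Europe': 800.0}
print(rollup(facts, "city", region="Asia"))  # {'Kyoto': 1700.0, 'Tokyo': 3000.0}
```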

Data driven culture:

In today’s competitive world, decisions need to be made at every level of the business hierarchy, from top management to sales and operations staff. A data-driven culture eventually leads to better, more informed decisions. Not everyone in an organization is comfortable with BI, so businesses should enforce the use of BI at every level and support it with proper training and guidance.

Machine learning/advanced analytics:

Today, every major IT giant is adopting ML and deep learning to drive business and customer acquisition. Historical data is well suited to descriptive and diagnostic analytics and to driving decisions from them, but the human brain is limited in how much information it can take in when making decisions. Predictive analytics is a very good way to find patterns within data and forecast likely outcomes, which helps a business make quick decisions and build future strategy.
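As a minimal example of predictive analytics, the sketch below fits a straight-line trend to hypothetical monthly booking counts using ordinary least squares, then extrapolates one month ahead. Real forecasting would also account for seasonality and uncertainty; this only shows the idea of learning a pattern from historical data.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx                 # slope: average change per month
    a = mean_y - b * mean_x       # intercept
    return a, b

# Hypothetical monthly bookings for months 1..6.
months = [1, 2, 3, 4, 5, 6]
bookings = [100, 110, 125, 130, 145, 150]

a, b = fit_line(months, bookings)
forecast_month_7 = a + b * 7
print(f"trend: {b:.2f} bookings/month, month 7 forecast: {forecast_month_7:.1f}")
```

On Python 3.10 and later, statistics.linear_regression computes the same slope and intercept.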

Agile BI development:

Gone are the days when a BI solution took months to build. The quick adoption and success of Agile methodology in software development has paved the way to rethink BI solutions in terms of agile practices. Agile BI development helps the BI team and the business get quick wins on long-haul requirements.

Conclusion

Even companies that are not directly in internet-based businesses are using data to improve operational efficiency and ultimately gain an edge over others. The new BI trends will play a vital role in giving businesses the capability to make good decisions. This war of dominance will belong to those who make good use of data and technology.

Crisis and Innovation

The COVID crisis forced us to adapt. Luckily, we are in the IT industry, so the move to working from home has been a rather seamless one. And it has been an impressive journey so far at TSS in terms of how quickly we adapted to the situation and supported the business in this difficult time.

While this is a difficult time for everyone, it’s important to note that we’re all in this together. We’re responsible for more than simply our own well-being, and what this has proven to me is that we’re capable of so much more.

History shows that in times of great crisis, resilient people and leaders stood up and took charge, not only for themselves but to unite people around a cause.

“Innovation is the ability to see change as an opportunity – not a threat”. – Steve Jobs

The quote is quite relevant: if we see the current crisis as a change, we can see the opportunity it brings. A crisis presents unique conditions that allow us to think and move more freely to create rapid, impactful change. At the heart of innovation is solving problems, driven by the intensely human desire to help, to connect with other people, and to be part of the solution when things get hard.

In a time of crisis, innovation is more important than ever, so problem solving, resourceful thinking, and community consciousness are critical. We have seen this in every crisis: each brought evolution in medicine, science, technology, and other fields. Foundations were laid for years of innovation, and the new perspective gave people a reason to think forward. A crisis forces people to see things from a new perspective because it breaks the assumptions on which everyday life proceeds. It thereby opens a doorway into a different kind of world, one in which people can improvise new solutions inspired by generosity, empathy, goodwill, resilience, and resourcefulness that are often lacking in normal times. Factors that can foster innovation in a crisis include singular focus, urgent timelines, openness to collaboration, scalability, repurposing of resources, and flexibility. Bringing all these factors together in a reimagination team is a powerful mechanism for making innovation happen.

In the end, I must say that brighter days are coming. That is indubitable, as long as we keep our eyes on the future and make the most of our time and resources.